While I pay for multiple video streaming services, I never got on board with streaming music services such as Amazon Prime Music or Spotify. I’ve curated quite the collection of MP3s over the past twenty years, with a fair number of tracks that aren’t available through streaming services. Since most phone manufacturers have stolen our beloved SD card expansion slots, I’ve had to rely on various services the past few years to enjoy my music on the go.

This started with Amazon Music, when there was an option to pay for a given amount of storage that you could upload your music collection to and enjoy through the Amazon Music app on your mobile devices. Then came Google Play Music with a similar service, but then Google did what Google does and killed that product in favor of YouTube Music. Ugh.

I’ve since moved on to hosting a Plex server on a Raspberry Pi in my home’s network rack. Rather than loading my substantial music collection on the same SD card that the operating system runs on, I’ve opted to connect a 2.5” SSD with a bountiful amount of storage to the Raspberry Pi via USB. From here, I’m able to stream my music anywhere I have an internet connection through the surprisingly great Plexamp app.

I’ve got a bad habit of sometimes (almost always) only dropping new music onto the Plex server and skipping over my NAS, so I’ve finally decided to sit down and back up the music directory to my NAS. While working on this script, I realized there were a number of small devices and servers around my home that would also benefit from the occasional backup to help make my life easier in the event of hardware failure.

These aren’t production servers in a corporate environment, so I don’t need full snapshots of what I’m backing up. Realistically, I only need the latest changes to a handful of directories to be backed up to my NAS occasionally, which is exactly what rsync is good at. By default, rsync compares the modification times and sizes of files on both sides and skips any file that matches, so only new or changed files are transferred. That makes repeat runs much faster than copying a full directory every time, whatever the storage or network in between.

Prerequisites

The local system, where this script will run, needs a few software packages. rsync is obviously first and foremost, but the mailutils package is also needed for the “mail” command, which in turn relies on a configured mail transfer agent (MTA) to actually deliver messages. The OpenSSH client comes installed by default with most distributions, but if not, it is required as well, since it provides ssh, ssh-keygen, and ssh-copy-id for setting up authentication from the local system to the remote server.

On Debian-based systems, all of these packages can be installed through apt:

apt-get install openssh-client mailutils rsync

Chances are these packages are already installed.

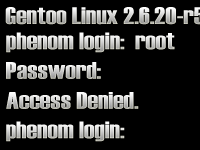
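A quick way to confirm nothing is missing before going further is to check that each command is on the PATH. This is a minimal sketch; the check_prereqs function name is my own, and the command list simply mirrors the tools used in this post.

```bash
#!/bin/bash
# Report any required command that is not on the PATH.
# Returns non-zero if at least one command is missing.
check_prereqs() {
    local missing=0 cmd
    for cmd in "$@"; do
        if ! command -v "$cmd" >/dev/null 2>&1; then
            echo "missing: $cmd" >&2
            missing=1
        fi
    done
    return "$missing"
}

# rsync itself, the OpenSSH client tools, and mail from mailutils.
if check_prereqs rsync ssh ssh-keygen ssh-copy-id mail; then
    echo "all prerequisites found"
fi
```

Note that this only confirms the mail command exists; whether messages are actually delivered still depends on the MTA configuration.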

Configuring Passwordless SSH

While rsync can run over a network or mounted filesystem, I prefer to use it over SSH for the encryption and the ability to easily swap out remote paths if I decide to back up to a server outside of my home or office. This script is going to run on a regular schedule through the system’s crontab, so authentication will be automated with a public-private keypair rather than storing the remote system’s password on the local system.

Chances are the local system already has a public key that can be used for this purpose in the .ssh directory of the local user’s home folder, typically ~/.ssh/id_rsa.pub (with the matching private key at ~/.ssh/id_rsa).

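In the event this key doesn’t exist, a new key pair can be quickly created by running ssh-keygen and following the simple prompts. Newer OpenSSH releases create an Ed25519 key (~/.ssh/id_ed25519) by default, so use ssh-keygen -t rsa if you want the id_rsa file names used here: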

ssh-keygen

I’ll leave the passphrase blank, since the key has to work for an unattended cron job and, again, I don’t want to store any credentials on the filesystem.
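If you’d rather skip the prompts entirely (for example, when setting up several small devices), ssh-keygen can be run non-interactively. The sketch below wraps it in a hypothetical ensure_key helper that only generates a key when none exists at the given path, so re-running it never overwrites a key already in use. It assumes an RSA key to match the file names in this post.

```bash
#!/bin/bash
# Hypothetical helper: create an RSA key pair with an empty passphrase at
# the given path, but only if no key exists there yet.
ensure_key() {
    local key_file="$1"
    if [ -f "$key_file" ]; then
        echo "key already exists: $key_file"
        return 0
    fi
    # Create the containing directory (e.g. ~/.ssh) as owner-only if missing.
    mkdir -p -m 700 "$(dirname "$key_file")"
    # -N "" sets an empty passphrase so cron can use the key unattended;
    # -q suppresses the banner and randomart output.
    ssh-keygen -q -t rsa -b 4096 -N "" -f "$key_file"
    echo "created: $key_file and $key_file.pub"
}
```

Calling ensure_key ~/.ssh/id_rsa produces the same pair of files the interactive prompts would, and a second call leaves them untouched.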

Next, the local system’s public key needs to be copied to the remote server or NAS. The contents of the public key should be placed in the .ssh/authorized_keys file in the home directory of the remote user who will own the files being copied. In my case, I’m copying the files to my QNAP NAS. OpenSSH provides the ssh-copy-id command, which does this in one fell swoop:

ssh-copy-id -i ~/.ssh/id_rsa.pub admin@192.168.1.xyz

The local user should now be able to connect to the remote server via SSH without the need for a password. The simple test is:

ssh admin@192.168.1.xyz

If this command logs in without prompting for a password, key-based authentication has been configured correctly. Otherwise, running ssh with -v shows which keys the client offered, and the SSH server’s log on the remote system (/var/log/secure or /var/log/auth.log, depending on the distribution) should indicate what went wrong. In my experience, any issues are usually related to the ownership and permissions on the .ssh folder on one of the systems.
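Since permissions are the usual culprit, here is a sketch of two small helpers, both hypothetical names of my own. fix_ssh_perms applies the commonly recommended modes (700 on the .ssh directory, 600 on authorized_keys and private keys, 644 on public keys); sshd’s StrictModes check rejects group- or world-writable files, and ssh ignores private keys readable by others. can_login_without_password uses BatchMode so ssh fails instead of prompting, which mimics what a cron job would experience. BatchMode also refuses to accept an unknown host key, so connect interactively once first.

```bash
#!/bin/bash
# Hypothetical helper: tighten permissions on an .ssh directory
# (defaults to the current user's ~/.ssh).
fix_ssh_perms() {
    local ssh_dir="${1:-$HOME/.ssh}" key
    chmod 700 "$ssh_dir"
    if [ -f "$ssh_dir/authorized_keys" ]; then
        chmod 600 "$ssh_dir/authorized_keys"
    fi
    for key in "$ssh_dir"/id_*; do
        [ -f "$key" ] || continue   # the glob matched nothing
        case "$key" in
            *.pub) chmod 644 "$key" ;;   # public keys may be world-readable
            *)     chmod 600 "$key" ;;   # private keys must be owner-only
        esac
    done
    return 0
}

# Hypothetical helper: succeed only if key-based login works with no prompt.
can_login_without_password() {
    ssh -o BatchMode=yes -o ConnectTimeout=5 "$1" true
}
```

For example, can_login_without_password admin@192.168.1.xyz && echo "ready for cron" is a quick final check before scheduling anything.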

Creating a Backup Script

Realistically, the backup I needed can be done in a single line and easily placed in the local system’s crontab:

rsync -avzP /home/myuser/backup_this_folder/ admin@192.168.1.xyz:/backups/

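The above command would copy the contents of /home/myuser/backup_this_folder into the /backups/ folder on my NAS. The trailing slash on the source path matters: it tells rsync to copy what’s inside the folder rather than the folder itself.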

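The -avzP switches enable archive mode (a), which recurses into directories and preserves symbolic links, permissions, and timestamps; verbose output (v); compression during transfer (z); and -P, which is shorthand for --partial --progress (keep partially transferred files so an interrupted transfer doesn’t start those files over from scratch, and show progress). The verbose output and progress aren’t necessary when running this command from crontab, but they are helpful for testing. The output of the command could also be redirected to a log file if desired.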

But what if I want to back up a handful of directories and perhaps get an e-mail notification in the event of a failure? One option would be to copy and paste the command above and make adjustments for each directory I want to back up, but a single script would make things simpler and easier to maintain moving forward.

    #!/bin/bash

    REMOTE_USER=admin
    REMOTE_SERVER="192.168.1.xyz"
    REMOTE_PATH=/share/storage/pi/
    LOCAL_DIRS=(/mnt/usb/ /home/pi/)
    EMAIL="you@example.com"   # placeholder; set to "" to skip e-mail

    ERROR=0

    # copy each local directory to the remote path
    for LOCAL_DIR in "${LOCAL_DIRS[@]}"; do
        # "${LOCAL_DIR%/}" drops the trailing slash so each directory gets its
        # own subfolder under REMOTE_PATH (usb/, pi/) instead of all of their
        # contents being merged into one folder
        if ! rsync -avz --progress "${LOCAL_DIR%/}" "$REMOTE_USER@$REMOTE_SERVER:$REMOTE_PATH"; then
            ERROR=1
        fi
    done

    # Only send an e-mail if it is set
    if [ -n "$EMAIL" ]; then
        if [ "$ERROR" -ne 0 ]; then
            mail -s "rsync backup error for $REMOTE_PATH" "$EMAIL" <<< 'Failure'
        else
            mail -s "rsync backup complete for $REMOTE_PATH" "$EMAIL" <<< 'Success'
        fi
    fi

With this script, I can easily change out settings and use the script across multiple systems. REMOTE_USER, REMOTE_SERVER, and REMOTE_PATH can all be adjusted to where I want my backups to be stored, and LOCAL_DIRS will allow me to specify multiple directories to be backed up on the local system.

The EMAIL variable, if set, will trigger the sending of an e-mail from the local system alerting the recipient of a success or failure of the backup job. Again, this depends on the mail command from the mailutils package and a properly configured mail transfer agent on the local system.

Scheduling the Script with crontab

Realistically, I’m only adding music to my collection once or twice a month, but some of the other directories I may choose to back up could benefit from daily or even twice-daily backups, depending on how often their files change. I’ll set up the script in the crontab file of a user with access to all the directories being backed up:

$ crontab -e

Running the script every day at midnight fits my needs:

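0 0 * * * /home/bioslevel/scripts/backups.sh

Saving the changes will automatically reload the crontab and enable the job. The script itself needs to be executable for cron to run it directly (chmod +x /home/bioslevel/scripts/backups.sh).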
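By default, cron e-mails any output from a job to the crontab’s owner (assuming local mail delivery works), and the script’s verbose rsync output would end up there. A couple of variations on the entry above, sketched under the assumption that a log file in the home directory is acceptable (backups.log is a hypothetical name):

```bash
# crontab entries for the backup script (edit with: crontab -e)

# Every day at midnight, appending all output to a log instead of cron mail
0 0 * * * /home/bioslevel/scripts/backups.sh >> /home/bioslevel/backups.log 2>&1

# Alternative: twice a day, at midnight and noon
# 0 0,12 * * * /home/bioslevel/scripts/backups.sh >> /home/bioslevel/backups.log 2>&1
```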

Conclusion

This script gives me a very simple way to back up multiple directories on a given system to a remote system over SSH. SSH provides encrypted communications between the local system and remote server, which is particularly important if the backups are being performed on a public network or over the internet. The real star of the solution is rsync, which compares file sizes and modification times to determine what files need to be copied and can compress data in transit to speed up transfers.

rsync is capable of much more than what I’m using it for here, such as keeping directories in sync across multiple systems, but even this simple setup lends plenty of power to my backups. Even running on a Raspberry Pi 4, backups are surprisingly fast thanks to the solid state drive and a gigabit connection.